Capture One is must-have software for professional photographers, ideal for studio work, particularly in fashion, commercial, product and portrait photography.

Summary

Collaboration in studio setups was very limited for professional photographers and their teams. During Covid, the need for remote collaboration emerged and Capture One delivered a magical experience of sharing photos online during a shoot with CO Live. When Covid ended, the need for CO Live decreased and we were back to limited collaboration in studio setups.

I was part of an ambitious project to redesign the way professional photographers collaborate with their teams during studio shoots.

To comply with my non-disclosure agreement, I have obfuscated and omitted from this case study anything confidential. All information is my own and does not necessarily reflect Capture One.

My Role

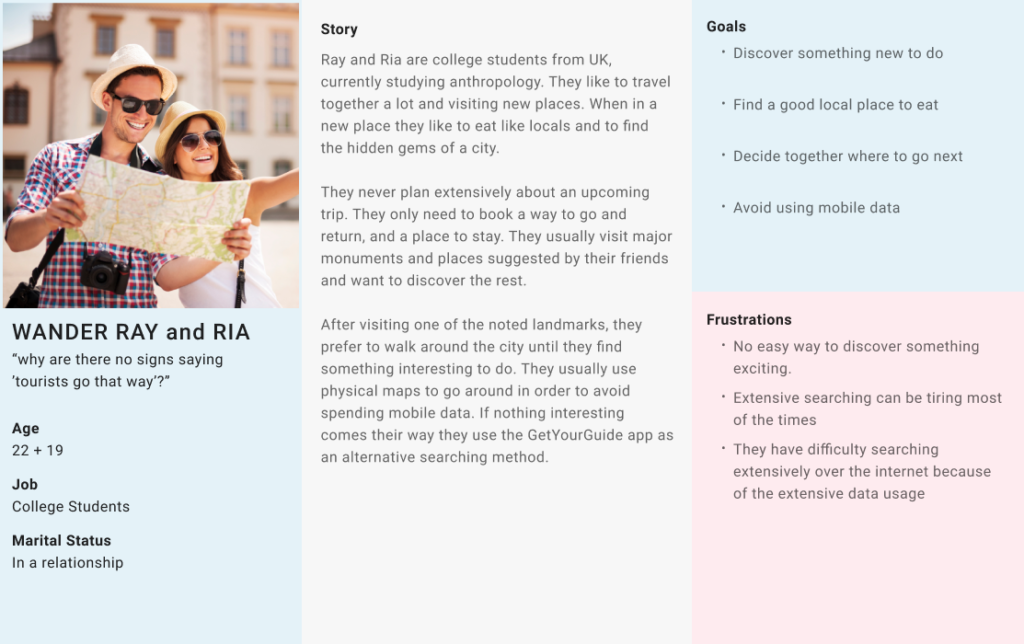

At Capture One I was responsible for designing smooth collaboration between professional photographers, their team members and their clients across their whole workflow.

I led the design of a real-time photo sharing experience, called Capture One Live for Studio, as a solo product designer.

I worked together with a Researcher, an Engineering Manager and a Product Manager to discover the opportunities and establish our business goals, and with a small team of 3 engineers during development.

The project started in October 2023 and the app launched in May 2024.

Problem Statement

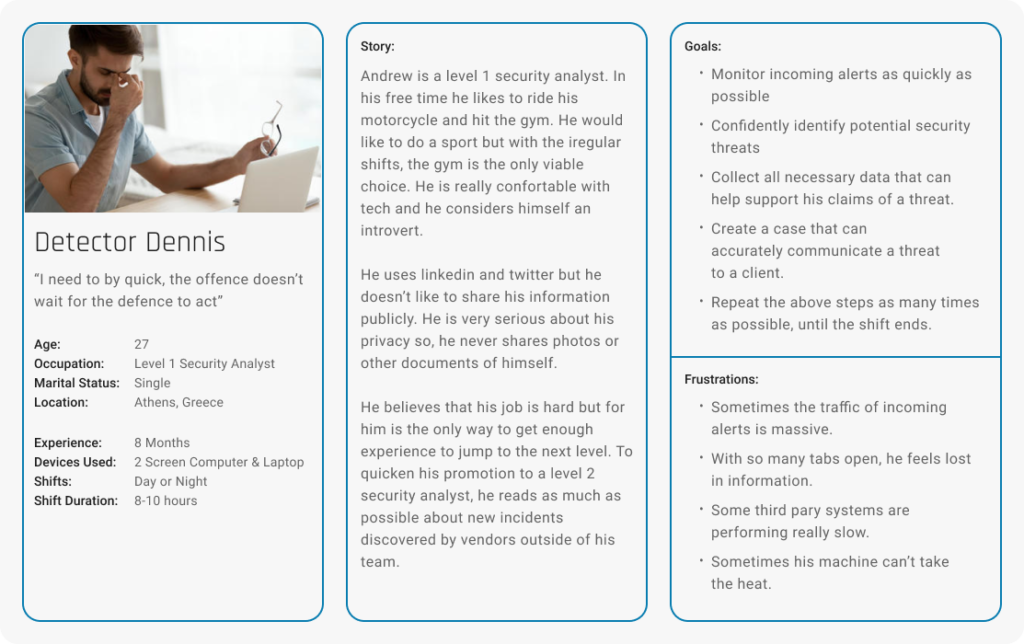

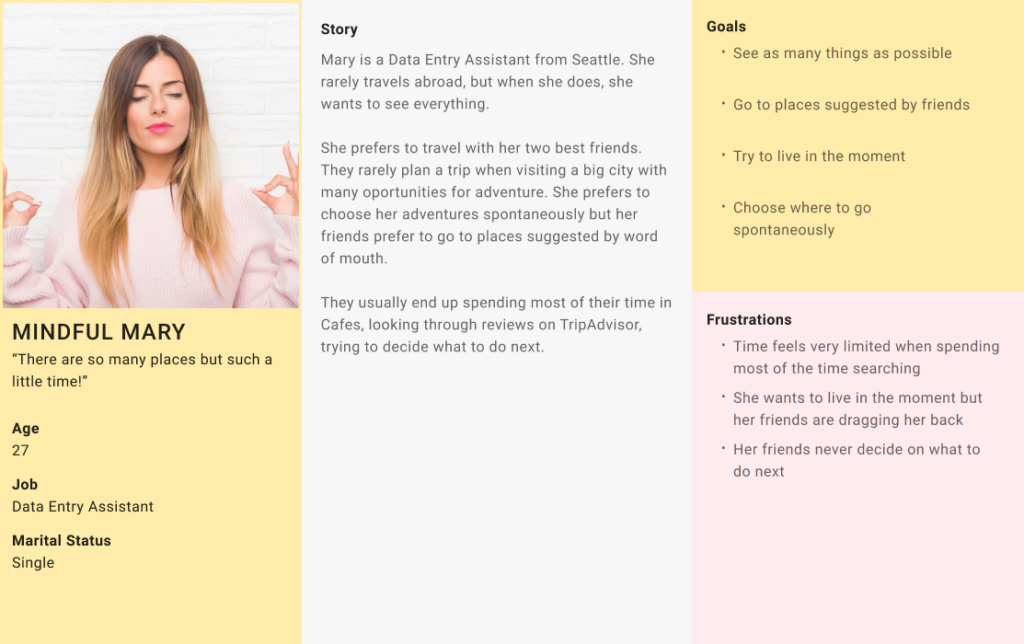

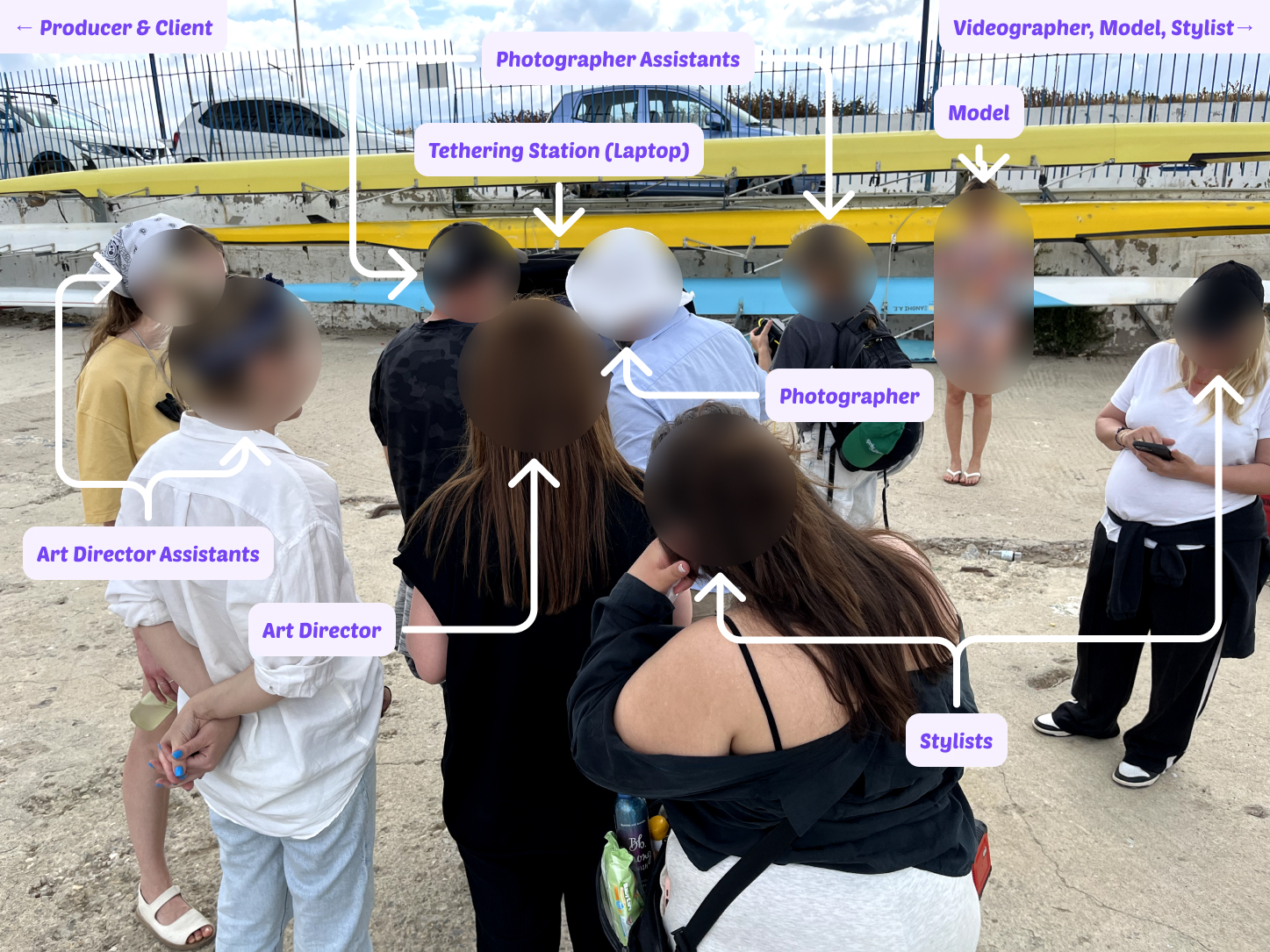

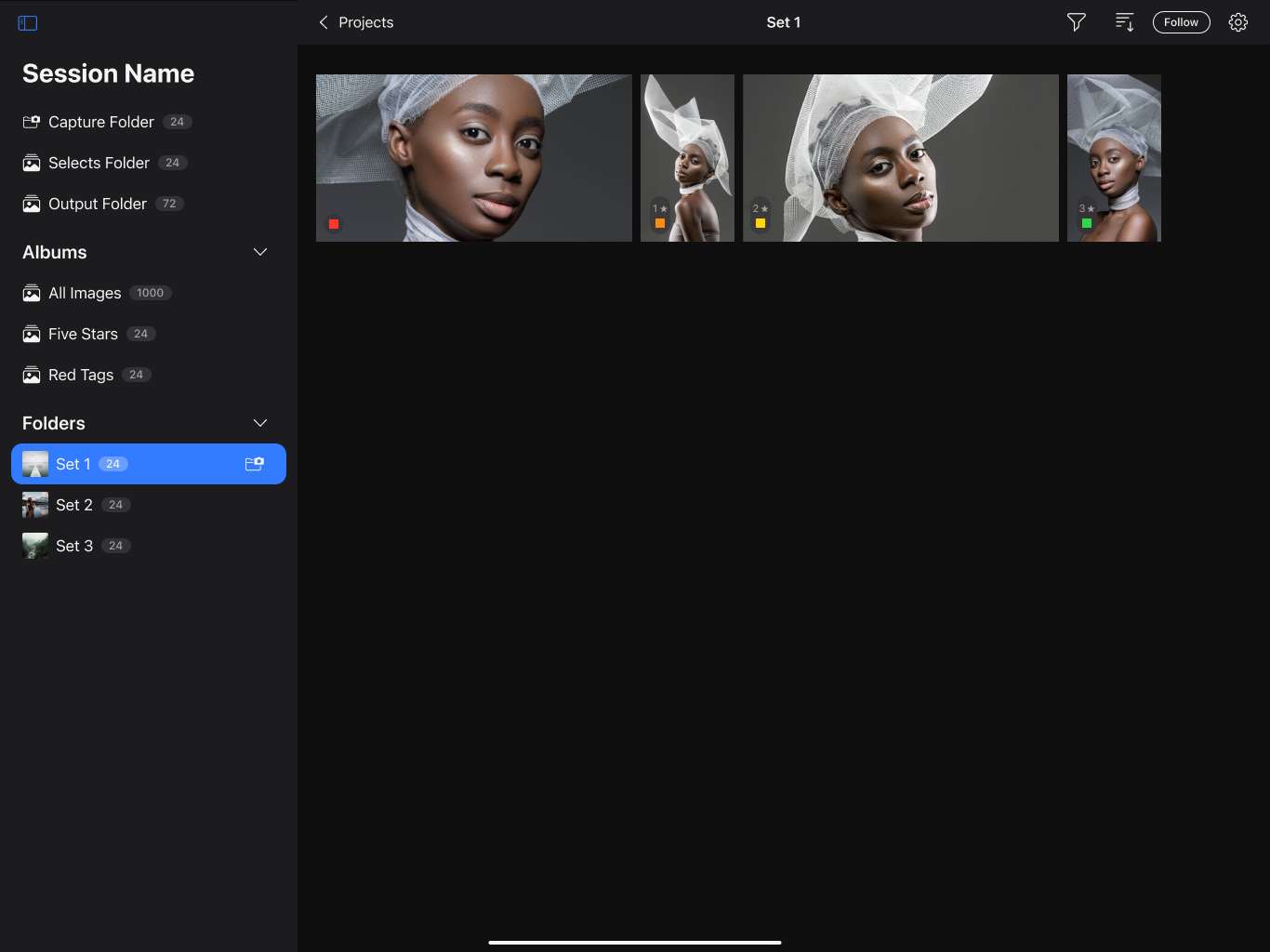

Capture One has always had a functionality called “tethering”, which allows photographers to connect their camera to their laptop so that photos are transferred there immediately while shooting. While this works great for small teams, it is not ideal for larger ones. In bigger productions, a team can consist of 10 or more people, making the laptop a very crowded place to be. (Image 1)

Back in 2019, when Covid started, the need for remote collaboration emerged. Capture One released CO Live, a new experience that let professional photographers share photos online during a shoot. CO Live transformed the way photographers collaborated remotely. However, when Covid ended and all collaborators returned on site, CO Live struggled to support collaboration in the fast-paced environment of a studio photoshoot.

Challenge

Our goal for this project was to elevate the way larger teams would collaborate during a shoot. The premise was: the photographer takes a photo, the assistant edits it on the tethering device, the team reviews on a mobile device.

Our high-level goals were to make something:

- Fast: Make it render high quality photos fast.

- Easy to use: Make it easy for everyone.

- Stable: Ensure that it is reliable at all times.

- Pressure-free: Reduce the number of eyes on the tethering device.

Research and Discovery

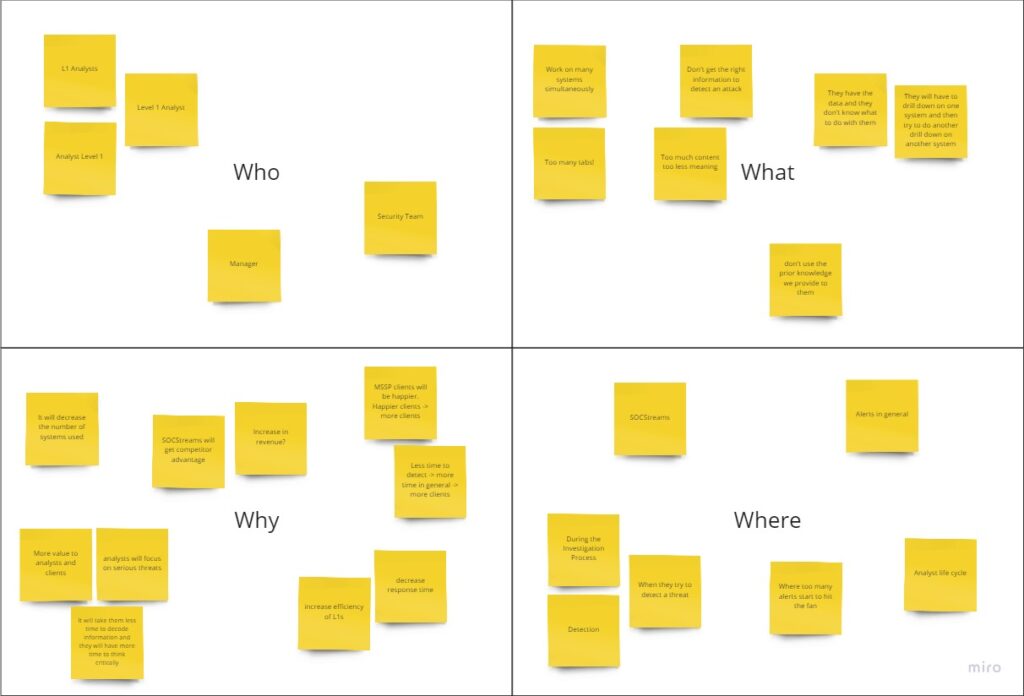

With the help of our researcher Andreas, we established a continuous research approach at Capture One, where everyone on the design team gets to observe and record field studies. This gave us a good understanding of what happens during a shoot from the start, while I also conducted interviews to uncover more targeted nuances and pain points.

Combining the existing insights from field studies with the follow-up interviews gave my team a clear mission and specific goals for the project.

Our research showed that in each shoot, the Art Director is the one who reviews the photos and makes sure that “they have the shot”. We observed 2 use cases in each shoot where the Art Director needs to check the photos.

1. Passive Observation

The Art Director wants to observe the shoot and ensure that things are going according to plan and that the feedback given to the photographer and the model shows in the photos.

2. Active Review

The Art Director wants to review previous photos from the current or past sets and select the best ones, while the shoot keeps going.

With the ways of collaborating available at the time, the following problems surfaced.

- CO Live is unusable when internet connectivity is limited, because photo transfers become very slow, creating huge delays in the 1st use case. With no internet at all, the 2nd use case becomes impossible as well.

- The Art Director can only see what the assistant sees, making it difficult for the assistant to work on a specific photo while the Art Director wants to review previous photos. This creates a bottleneck in the 2nd use case.

- Many photographers had created workarounds to support a local collaboration flow, via screen sharing apps, to reduce the number of people looking at their screen while they work. However, people would still prefer to look at the laptop, where the screen was bigger and the action was happening.

Design Process

User Flow Information

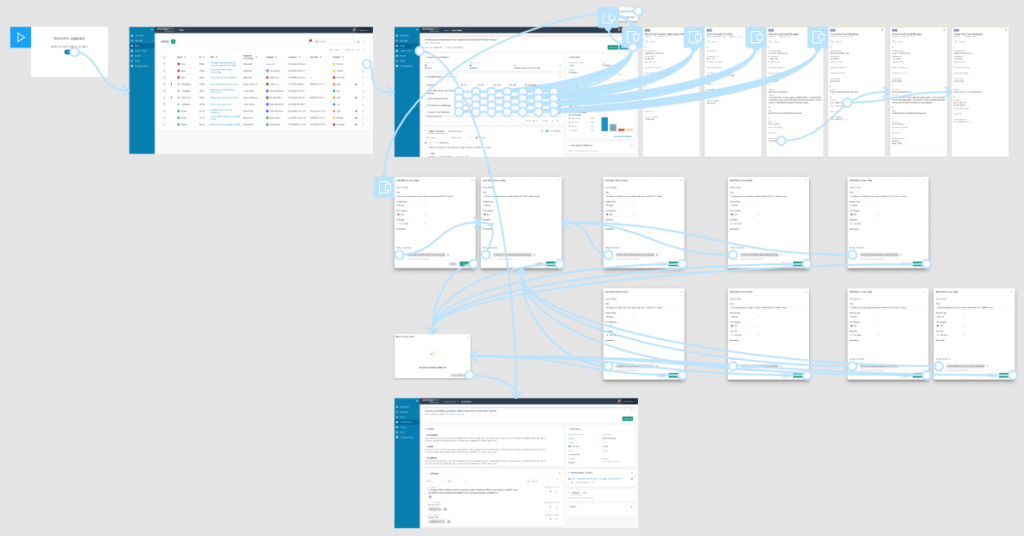

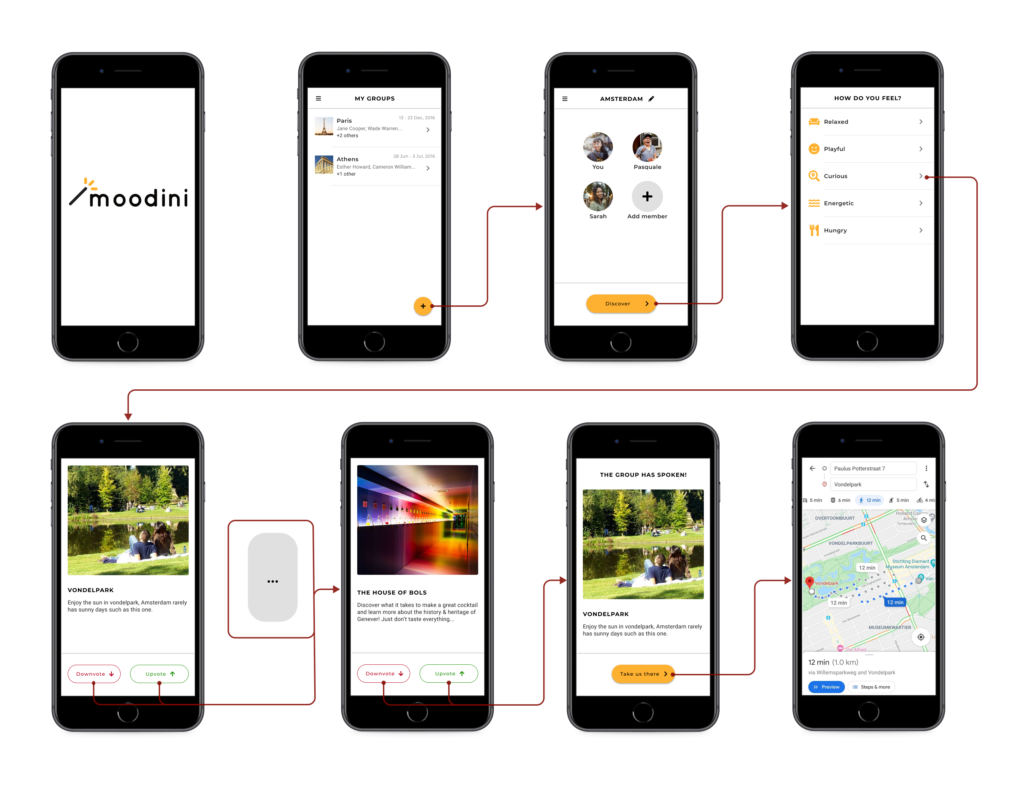

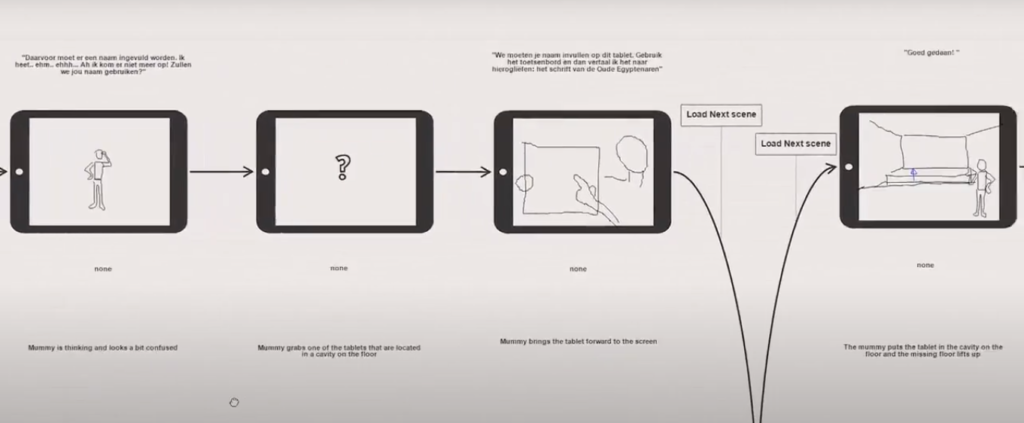

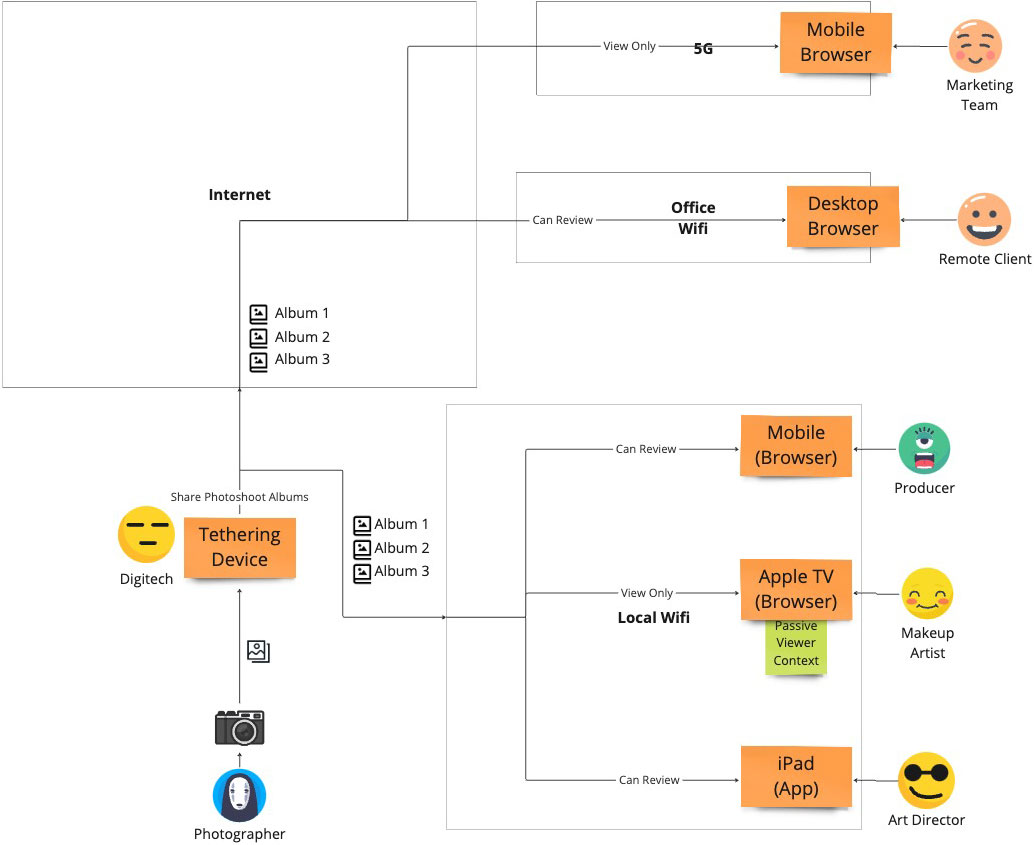

I designed the flow (Image 3) based on the larger vision of where we wanted this collaboration experience to go. The idea was that everyone could access the shoot from any device and any network, allowing for both local and online collaboration at the same time and merging the new experience with the existing experience of CO Live.

However, for the first iteration of the product we adopted a much simpler approach that allowed collaboration to occur either online or locally and only from an iPad.

We named the app “CO Live for Studio”, with the intention of merging it with “CO Live” in a future iteration.

We chose to start with only an iPad app because, interestingly enough:

- At least one iPad is found in most studio setups.

- Its screen is large enough to increase the confidence of Art Directors when reviewing the photos.

Design Prototypes

I designed CO Live for Studio with both use cases in mind.

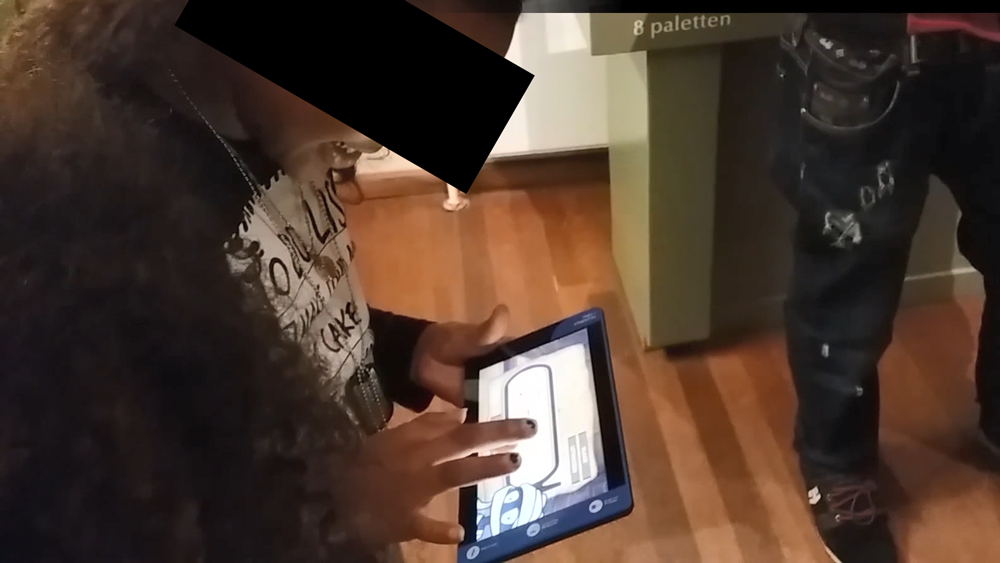

- Passive Observation: I designed an experience that allows for a passive “follow” mode, following the shoot as it happens, with 0 clicks. Much like tethering transfers a photo from the camera to the laptop, the laptop transfers it to the mobile device via the local network, even if the local network has no internet access at all (Image 4).

- Active Review: The photographer decides which albums can be seen on the iPad app. Then the Art Director can freely move between the photos of any shared set (Image 5).

For the UI and interaction, I used the same patterns I had designed back in 2022 for the “Capture One mobile” app. Both “Capture One mobile” and “Live for Studio” had a similar visual experience, so we had already worked on and tested these patterns extensively over the past 2 years. An added bonus was that we got to maintain consistency.

Testing

We tested the app using a working prototype made by our team. With the help of our colleagues in Copenhagen, we distributed a closed beta to a select group of studio photographers for testing.

I recommended this approach for 2 key reasons:

- The app’s nature: This app relies on a local network to transfer photos between devices. Add factors such as the fast-paced environment of a photoshoot, the pressure to deliver results, and the presence of numerous people, and testing it any other way creates huge challenges for both the accuracy of the results and practicality.

- High confidence: Our extensive research gave us strong confidence and deep insights into the collaboration-related problems happening during a studio shoot. As a bonus, this accelerated the delivery.

Outcomes and Reflection

In May 2024 the CO Live for Studio app was released as part of a bigger launch with the goal of supporting the studio workflow. This was an impressive achievement by the team, considering that it was a total redesign of the way collaboration happens in studios, delivered in only 6 months.

Around 40% of all studio users use Live for Studio daily, with an average duration of 2 hours, making it one of the top studio workflow tools Capture One has released and the main driver for Studio subscriptions.

I'm excited to use features like Live for Studio and Client Multi-Viewer, making image sharing and receiving feedback from clients and creatives much easier.

Brian F.

First off, can I just say thank you for all your efforts on Studio - particularly with the new Live iPad app - I ran it properly for the first time on a big shoot last week and it ran almost completely flawlessly across 3 iPads over 4 days. Excellent stuff.

Ben C.

The new Live for Studio app adds a reliable and efficient way to collaborate with art directors and clients.

Laimonas S.