SOCStreams is one of the most established cyber-security incident response systems for managed security service providers (MSSPs) in Europe and the Middle East.

Summary

Cybersecurity analysts often need to sort hundreds of incidents into false positives and real threats. While SOCStreams provided a large amount of information to analysts, it failed to surface the information that mattered for each type of incident, which drove analysts to other platforms to fill that need. As a result, analysts were losing time moving between different platforms to find what they were looking for.

I was part of a project to increase the confidence of analysts when investigating the severity of an incident within the platform.

To comply with my non-disclosure agreement, I have obfuscated or omitted anything confidential from this case study. All information is my own and does not necessarily reflect the views of Capture One.

My Role

I led the initial user research and defined the key personas, created the interaction design of our prototyped solution, and helped evaluate our design through a series of usability tests that I conducted.

Solution

We based our strategy on the design thinking methodology, which includes 5 steps: empathize, define the problem, ideate, prototype, and test. We eventually designed a solution that allowed analysts to understand the nature of incoming threat alerts more quickly.

Empathize

To frame the analysts’ point of view, I conducted 8 hours of field research with a security team of 9 members (1 manager, 1 level-3, 4 level-2, and 3 level-1 analysts) and interviewed 9 level-1 and 5 level-2 analysts. The field research let me see in person what a security team’s day-to-day job consists of, what their goals are, and what their pain points are. The interviews gave me additional in-depth context on who analysts are and how they feel and think.

In addition, we invited a level-2 analyst to walk us through the process followed during threat detection, investigation, and communication with the MSSP’s clients. We chose a level-2 analyst because he was responsible for training level-1 analysts. The walkthrough helped my team and me understand more deeply the process an analyst would follow during an actual threat.

Personas

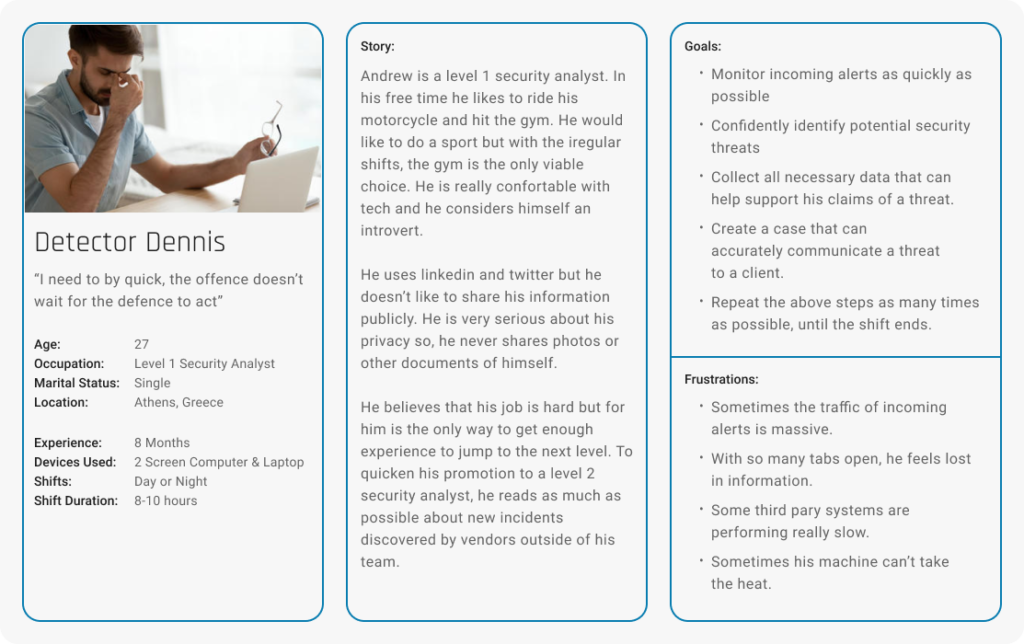

Based on the data collected during the field research and the interviews, I created 2 key personas that would help us maintain focus on the real challenges analysts overcome during the detection process of a threat.

The 2 personas were:

- Detector Dennis

- Expert Evan

Detector Dennis is part of the level-1 analyst team. He is responsible for going through the stream of incoming alerts, distinguishing potential threats from false positives, and building a case using various indicators of compromise. If the threat is too complicated for him to handle, he will escalate it to a level-2 analyst.

Expert Evan is a level-2 analyst. Among other things, he is mainly responsible for supporting level-1 analysts with difficult cases and for communicating all cases created by level-1 analysts to the MSSP’s clients. He is also responsible for collecting the lessons learned during a case and translating them into use cases and playbooks.

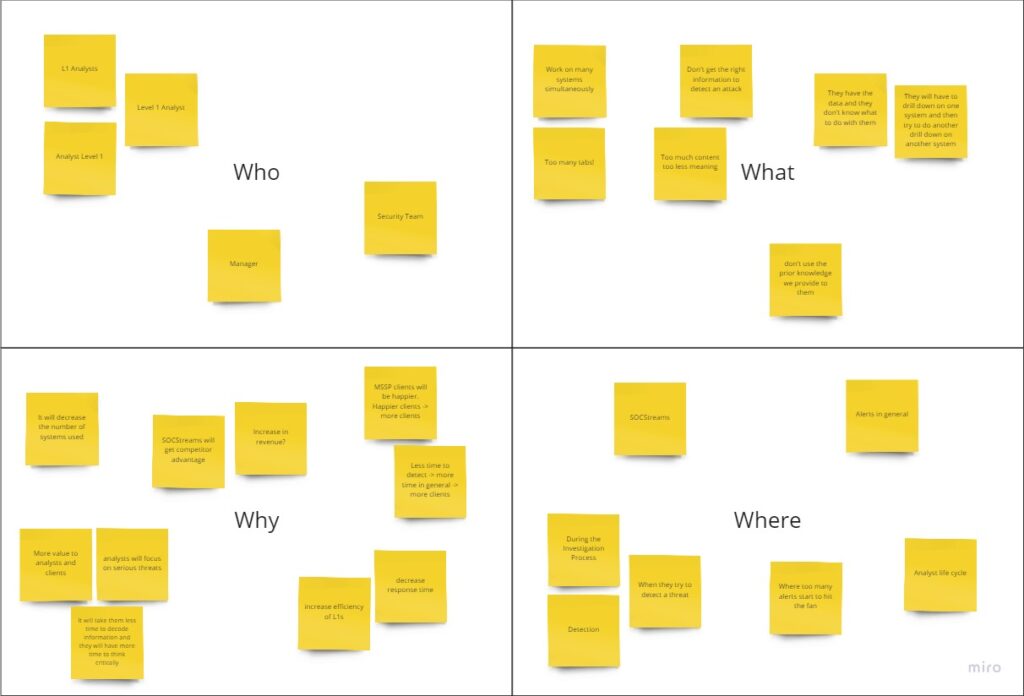

Define the Challenge

In general, threat detection and investigation is a time-consuming process during which an analyst has to go through a huge stream of incoming alerts, distinguish potential threats, scout them further to exclude any false positives, and investigate positive threats further to create a case. Some of the greatest challenges we had to consider were:

- SOCStreams alerts provided all the appropriate indicators an analyst would need to build a case. However, they didn’t provide enough context to help analysts detect threats.

- Analysts would use both alert indicators and prior knowledge to detect a threat.

- Analysts would usually draw on past alerts or closed cases as prior knowledge, and relied much less on use cases or playbooks.

- Different indicators might have different importance depending on the threat at hand.

- External investigation systems provide a great amount of detail for both detection and investigation. However, our product mission revolves around threat detection, casing, archiving, and communication.

Solving these challenges would help us increase the usage of the alert section. So, how might we provide analysts with enough context to support quicker threat detection?

Ideate

Our solution was based on the assumption that analysts would still use external investigation systems to further investigate positive threats. However, most incoming alerts are false positives or non-issues, and only a few are incidents. Thus, giving analysts enough indicators, combined with knowledge of past alerts and cases, would help them detect a positive issue. This would ultimately decrease the need to use external investigation systems for most alerts.

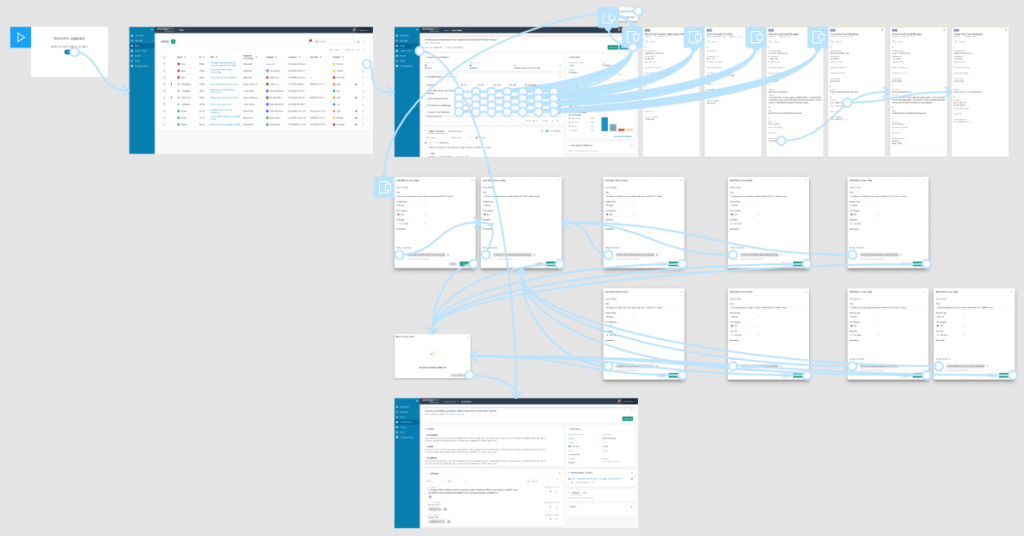

Prototype

I created an interactive prototype in Figma using our SOCStreams components. With the help of George, an awesome security expert at our company, we populated the prototype with near-real threat data that he generated for the purposes of prototype testing. That helped us test our idea in a setting that mimics a real-life situation in terms of both data and aesthetics.

Test and Evaluate

In total, I conducted 5 usability tests using the System Usability Scale for measurement: 2 with level-1 analysts and 3 with level-2 analysts who had been promoted from level-1 during the previous month. That helped me analyze how the solution affects the decision-making process of both experienced and inexperienced analysts. It also gave me numerical data to support my claims to the stakeholders who were not present during testing.

In our testing, we validated our assumption that analysts would eventually need to use investigation systems during their investigation drill-down. We also confirmed my assumption that analysts would be able to identify a cyber-attack or a false positive before having to use an investigation system. This would eventually allow them to cut down on the extensive use of investigation systems, limiting it to when it is necessary, and ultimately reduce the fragmentation of information coming from various sources.
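For readers unfamiliar with how a System Usability Scale score is derived, the following is a minimal sketch of the standard SUS arithmetic: 10 statements answered on a 1 to 5 scale, odd-numbered statements contributing the answer minus 1, even-numbered statements contributing 5 minus the answer, and the sum multiplied by 2.5. The function name `sus_score` and the sample answers are hypothetical and are not the data collected in this study.

```python
from statistics import mean


def sus_score(responses):
    """Convert one participant's 10 SUS answers (each 1-5) to a 0-100 score."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs exactly 10 answers, each from 1 to 5")
    total = 0
    for position, answer in enumerate(responses, start=1):
        if position % 2 == 1:
            # Odd-numbered statements are positively worded.
            total += answer - 1
        else:
            # Even-numbered statements are negatively worded.
            total += 5 - answer
    return total * 2.5


# Hypothetical answers for two participants (NOT the study's real data).
sample_sessions = [
    [4, 2, 4, 2, 4, 2, 4, 2, 4, 2],  # scores 75.0
    [3, 3, 3, 3, 3, 3, 3, 3, 3, 3],  # scores 50.0
]

scores = [sus_score(session) for session in sample_sessions]
print(scores)        # [75.0, 50.0]
print(mean(scores))  # 62.5
```

The overall result for a round of testing is usually reported as the mean of the per-participant scores, which is how a single figure for the whole study can be obtained.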

Conclusion

Framing a point of view based on such a niche group of users was a great challenge on its own. I learned that I can solve this challenge by approaching a problem through the eyes of a novice analyst. During the various process cycles, I never stopped looking for meaning in the actions of analysts. I would watch videos on how to set up an investigation system to get meaningful alerts, how to detect specific threats, and what to do to contain them. This approach allowed me to immerse myself in the job of an analyst so I could get really close to the real challenges they face.

I also learned that to solve great problems you need to consider the superpowers of people outside of your general scope. If I had never considered asking for the help of our cyber-security specialist, I wouldn’t have been able to test the prototype with near-real data. The usability tests could then have produced somewhat misleading results, ultimately preventing us from focusing on the important feedback that we received.

Iterative user research led us to a solution that seems to provide enough context and content to allow analysts to get a good understanding of what an incident is about. Based on the System Usability Scale results, participants rated the solution at 70 points. That means that while the solution can benefit them in their work, it can still grow in the future to provide more value. We are currently in the process of developing a beta version in order to collect quantitative data and see how analysts respond to the redesigned section under day-to-day stress. This will allow me to work on improving its interaction elements, based on tracking and continuous feedback.

For a first evaluation of an alert, this feature seems useful. You can definitely get an idea of what is going on, and it has nothing to do with the previous design for sure!

Participant C

It does get you a large percentage of the information I’ll need to draw conclusions, I would say about 80%. Now, I have to say that this info is good enough to get a first taste of what is going on.

Participant D